What Donors Should Look For in Impact Audits That Hold Up

TL;DR

- Follow the method, not the mood.

- Prefer outcomes over outputs, plus full-cost numbers.

- Trust audits that show limits, misses, and independent review.

- In 2026, data security and compliance belong in the picture too.

A glossy report can flatter a donor. A real impact audit should do something harder: show what changed, how the charity knows, and where the evidence is thin.

If you’re giving serious money, good intentions aren’t enough. You need an audit that can survive daylight, because weak evidence wastes funds and time. Start with the parts many organisations tuck into the small print.

Method first: can you trace each claim back to evidence?

Strong impact audits show what was measured, who measured it, when, and against what starting point. They name the baseline, time frame, sample size, and data source. If a claim says school attendance rose, the audit should say by how much, over what period, and in which group.

Better audits also say whether they used before-and-after data, a comparison group, or third-party records. Without that, attribution gets slippery. A charity may have helped, but an audit should not claim full credit unless the evidence can carry that weight.
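To make the attribution point concrete, here is a minimal sketch of how a comparison group changes the size of a claim. The attendance figures and the function name `attributable_change` are hypothetical, chosen only for illustration. The method shown is a simple difference-in-differences calculation, which assumes the comparison group would have followed the same trend as the programme group without the programme.

```python
def attributable_change(prog_before, prog_after, comp_before, comp_after):
    """Difference-in-differences: the programme group's change
    minus the comparison group's change over the same period."""
    programme_change = prog_after - prog_before
    comparison_change = comp_after - comp_before
    return programme_change - comparison_change


# Hypothetical attendance rates in percent (not real data).
prog_before, prog_after = 70.0, 82.0   # schools in the programme
comp_before, comp_after = 71.0, 76.0   # similar schools outside it

# A before-and-after figure credits the programme with the whole rise.
naive = prog_after - prog_before

# The comparison group shows how much attendance rose anyway.
adjusted = attributable_change(prog_before, prog_after, comp_before, comp_after)

print(f"Before-and-after claim: {naive:.1f} points")
print(f"Net of comparison group: {adjusted:.1f} points")
```

In this made-up case the headline rise is 12 points, but only 7 of them can plausibly be credited to the programme. An audit that reports only the first number is claiming more than its evidence can carry.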

Good audits define their terms. “Reached” can mean a flyer was handed out. “Completed” can mean one session. “Improved” can mean almost anything if the measure stays fuzzy. Clear language matters because donors often fund based on a few headline claims.

Many reports still miss this mark. A recent analysis of weak impact reports points out that long, vague documents rarely build donor trust. If you want a sharper lens, the High Impact Giving Toolkit is helpful because it pushes donors to examine the strength of evidence, not the size of the headline.

If you can’t follow a number back to its source, treat it as marketing.

When the method is thin, even lovely results become guesswork. Some reports are polished enough to distract you from what they do not say. Don’t reward that.

Strong impact audits measure change, not activity

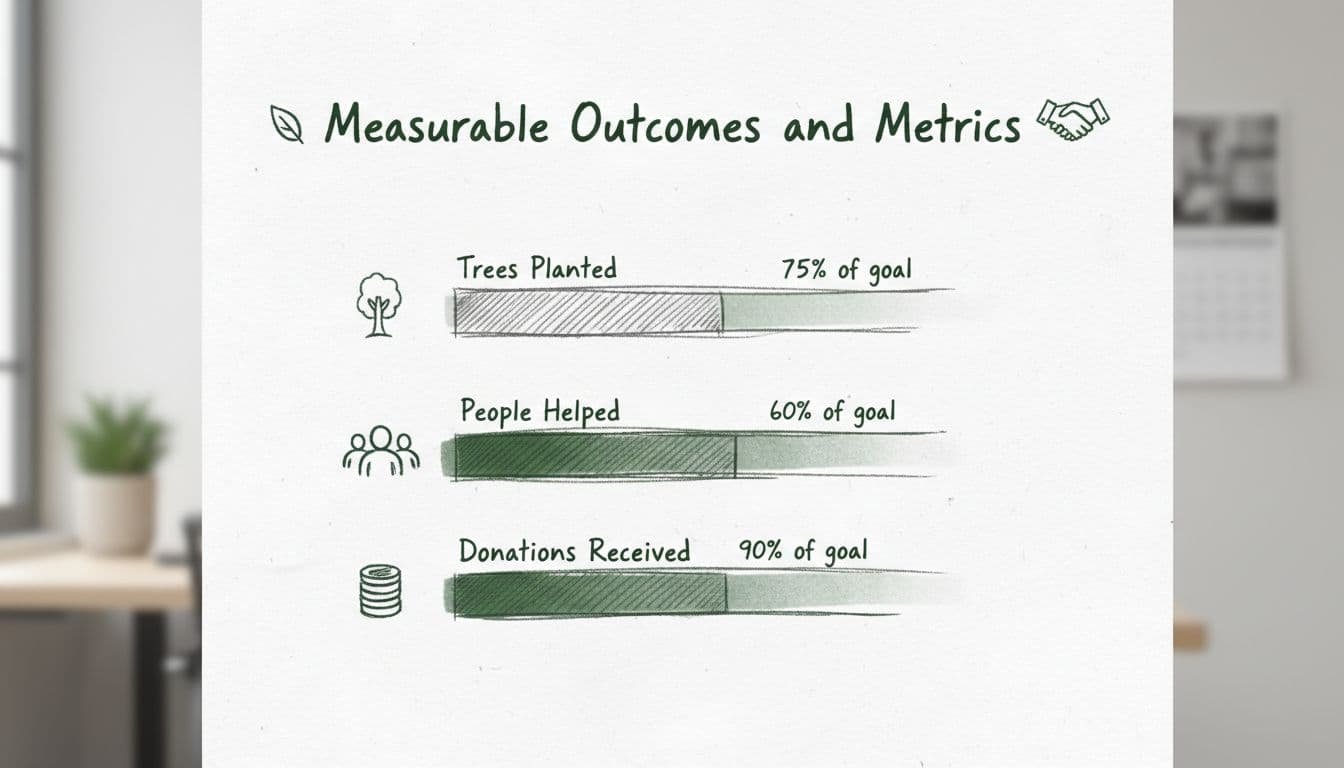

Outputs are work done. Outcomes are change created. Planting 5,000 trees is an output. Tree survival after 24 months is an outcome. Serving 10,000 meals is an output. Lower food insecurity scores are an outcome. Donors should ask for the outcome in each pair, reported alongside the output.

You should also look for cost per meaningful result. “Low cost” tells you nothing. “₹800 per student retained for a full year” tells you something. So does a breakdown by location, gender, age, or risk group. Broad averages can hide who was left behind.
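The arithmetic behind cost per meaningful result can be sketched in a few lines. Everything here is an assumption made for illustration: the `SiteResult` fields, the two sites, and all figures are hypothetical, and "full cost" is taken to mean direct programme spend plus an allocated share of overhead.

```python
from dataclasses import dataclass


@dataclass
class SiteResult:
    site: str
    direct_cost: float    # programme delivery spend (hypothetical, in rupees)
    overhead_cost: float  # allocated share of staff, admin, monitoring
    enrolled: int         # output: students reached
    retained: int         # outcome: students retained for a full year


def full_cost(r: SiteResult) -> float:
    return r.direct_cost + r.overhead_cost


def cost_per_head(r: SiteResult) -> float:
    return full_cost(r) / r.enrolled


def cost_per_outcome(r: SiteResult) -> float:
    if r.retained == 0:
        raise ValueError(f"{r.site}: no outcomes recorded, cost per outcome is undefined")
    return full_cost(r) / r.retained


# Made-up figures, not drawn from any real audit.
sites = [
    SiteResult("Site A", 600_000, 200_000, enrolled=1_200, retained=1_000),
    SiteResult("Site B", 300_000, 100_000, enrolled=800, retained=250),
]

# One blended figure across all sites, as a headline number would report it.
blended = sum(full_cost(s) for s in sites) / sum(s.retained for s in sites)

for s in sites:
    print(f"{s.site}: ₹{cost_per_head(s):.0f} per head, "
          f"₹{cost_per_outcome(s):.0f} per student retained")
print(f"Blended: ₹{blended:.0f} per student retained")
```

With these numbers, Site B looks cheaper per head (₹500 against ₹667) yet costs twice as much per student retained (₹1,600 against ₹800). The blended figure of ₹960 hides that gap, which is why a breakdown by location or group is worth asking for.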

This quick comparison helps sort useful audits from flattering ones:

| Audit feature | Good sign | Red flag |
|---|---|---|
| Numbers | Baseline, sample, period | Big totals only |
| Outcomes | Tracks real change | Counts activity only |
| Cost | Full cost per outcome | Cheap per head only |
| Honesty | Shows misses and limits | Near-perfect success claims |

A credible audit does not try to win every row.

Time matters as well. Some change arrives late. A three-year study by the Center for Effective Philanthropy (CEP) on large unrestricted gifts found that stronger results can appear over several years, because organisations often use flexible funding to build staff, systems, and stability before programme gains show up. A one-year snapshot can therefore miss most of the story. When that is the case, the audit should say so plainly instead of pretending certainty.

Transparency and independent checks separate evidence from spin

A credible impact audit tells you who reviewed the work and how independent that reviewer was. If the same consultancy designed the programme, collected the data, and signed it off, keep your eyebrows up. Independence does not need theatre. It needs clear scope, clean documentation, and disclosed limits.

You also want the appendix, not only the executive summary. Good audits show assumptions, missing data, failed targets, and changes made after earlier findings. For large grants, due diligence for major gifts should cover governance, compliance, and programme evidence together. A solid charity audit framework guide also ties outcomes to costs, which keeps impact talk grounded.

That standard matters more in 2026. Nonprofits face tighter grant rules, more cyber risk, and more pressure to connect impact reporting with finance. For family foundations, boards, and ESG teams, the practical question is simple: could this audit survive a board challenge, a site visit, and a data-security review? An audit carries more weight when the charity's board has actually used it to change strategy, staffing, or grant terms.

Before you fund, keep four requests handy:

- Request the last two audit cycles, not one polished year.

- Check who paid the reviewer and who held the raw data.

- Read the management response, if there is one.

- Notice whether the audit changed any decision.

The final test is honesty

The best impact audits do not flatter the donor or the charity. They reduce blind spots. Clear methods, useful outcome measures, independent checks, and visible limits are what make an audit worth reading.

If you prefer funding where the path from gift to field action is easier to inspect, see Contribute to Active Missions. It supports zero-leakage work such as bird nests and mangrove planting, with 100% of funds going to the field.