How Geo-Tagged Impact Reports Work on the Ground

TL;DR: A geo-tagged impact report links project evidence to a real place, a real time, and a review trail. When it’s done well, it helps donors, boards, CSR teams, and auditors trust the work without having to take anyone’s word for it.

Anyone can paste smiling photos into a PDF and call it impact. The problem is proof. You need to show that the work happened where you said it did, when you said it did, and that it led to something more than a one-day photo op.

That’s why geo-tagged reporting has become standard practice for many NGOs, CSR teams, and ESG communicators. The idea sounds technical. The mechanics are plain enough once you strip away the jargon.

What a geo-tagged report is really proving

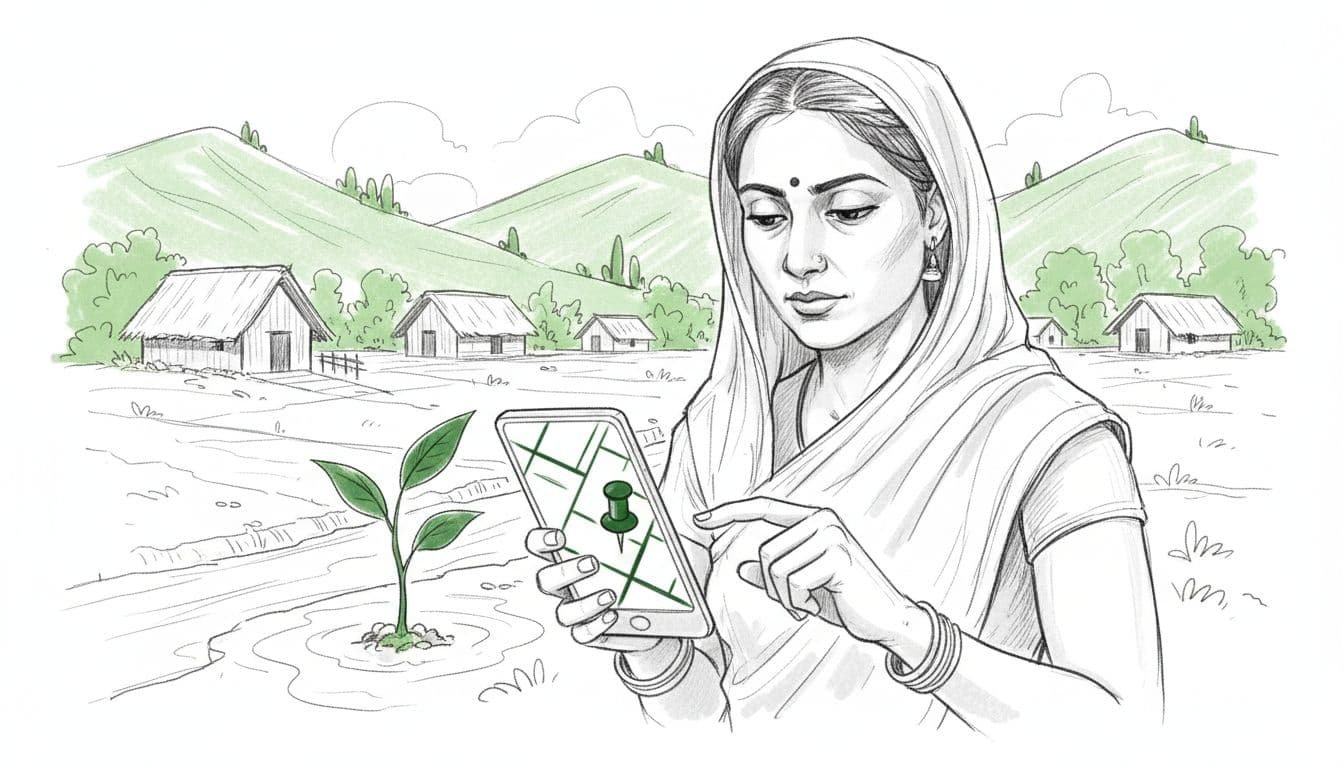

A geo-tag is location data attached to a photo, form entry, asset record, or site visit. Most teams collect it through a mobile app, along with the date, time, project ID, field notes, and media. On its own, that location pin doesn’t prove much. Combined with the rest of the record, it starts to tell a believable story.

That story usually answers four questions: what happened, where it happened, when it happened, and what changed afterwards. A good report doesn’t stop at “tree planted” or “toilet built”. It ties each claim to evidence that another person can trace back to the field.
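To make the four questions concrete, here is a minimal sketch of how one field record might be structured. The class names, field names, and sample values are all hypothetical; a real data collection app will have its own schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class FollowUp:
    visited_at: datetime
    status: str  # e.g. "functional", "needs repair", "abandoned"


@dataclass
class FieldRecord:
    project_id: str
    activity: str            # what happened
    latitude: float          # where it happened
    longitude: float
    captured_at: datetime    # when it happened
    photo_paths: list[str] = field(default_factory=list)
    notes: str = ""
    follow_ups: list[FollowUp] = field(default_factory=list)  # what changed afterwards

    def unanswered_questions(self) -> list[str]:
        """Return which of the four questions this record cannot yet answer."""
        missing = []
        if not self.activity.strip():
            missing.append("what")
        if not (-90 <= self.latitude <= 90 and -180 <= self.longitude <= 180):
            missing.append("where")
        if self.captured_at.tzinfo is None:
            missing.append("when")  # a timestamp without a timezone is ambiguous
        if not self.follow_ups:
            missing.append("what changed")
        return missing


# Made-up sample values, for illustration only.
record = FieldRecord(
    project_id="DEMO-001",
    activity="tree planted",
    latitude=19.0760,
    longitude=72.8777,
    captured_at=datetime(2025, 7, 14, 9, 30, tzinfo=timezone.utc),
    photo_paths=["photos/demo-001.jpg"],
)
print(record.unanswered_questions())  # ['what changed']
```

The sample record has a place and a time but no follow-up visit, so it can show presence and nothing more.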

You can see that logic in this Reliance Foundation geo-tagging case study, where water-harvesting structures were mapped with coordinates, dimensions, water availability, dates, and related project data. That’s not decoration. That’s documentation.

The same applies to long-term environmental work. A plantation project with geo-tags but no survival checks is half a report. A stronger example is the tree geo-tagging audit that tracked mortality, species, and yields across locations over time.

A blunt truth helps here: a map pin is not the outcome.

What it gives you is verifiable presence. It says, “someone recorded this intervention at this location”. To prove impact, you still need follow-up data, beneficiary context, and enough honesty to show what didn’t go to plan.

How the reporting process works on the ground

The workflow is less glamorous than the reports it produces. That’s a good thing. Reliable reporting is built on repeatable routines, not magic.

Field staff visit the site with a phone or tablet. They open a form, capture a photograph, allow GPS access, add notes, and log the activity or asset. Depending on the programme, they may also record beneficiary counts, species, materials used, or maintenance status.

In practice, the process usually follows five steps:

- Teams capture a baseline, often before work starts.

- They log the intervention with photos, coordinates, and field details.

- The records sync to a central dashboard, sometimes later if the site is offline.

- A reviewer checks for gaps, duplicates, and obvious errors.

- The approved data is turned into maps, summaries, and donor-ready reporting.

That’s the neat version. Real fieldwork is messier. Signal drops. Phones drift a few metres. Staff forget to switch location services on. A blurred photo sneaks through. Someone uploads six records from the same spot because they were standing under the only patch of shade.
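The "six records from the same spot" problem is easy to surface automatically. The sketch below groups records whose coordinates round to the same grid cell; the function name, record keys, and thresholds are assumptions chosen for illustration. Four decimal places of a degree is roughly 11 metres of latitude, which is in the same range as ordinary phone drift.

```python
from collections import defaultdict


def same_spot_groups(records, decimals=4, min_size=3):
    """Return lists of record IDs whose coordinates fall in the same grid cell."""
    cells = defaultdict(list)
    for rec in records:
        key = (round(rec["lat"], decimals), round(rec["lon"], decimals))
        cells[key].append(rec["id"])
    return [ids for ids in cells.values() if len(ids) >= min_size]


# Made-up coordinates, for illustration only.
records = [
    {"id": "r1", "lat": 19.07601, "lon": 72.87770},
    {"id": "r2", "lat": 19.07603, "lon": 72.87772},
    {"id": "r3", "lat": 19.07598, "lon": 72.87769},
    {"id": "r4", "lat": 19.09000, "lon": 72.90000},
]
print(same_spot_groups(records))  # [['r1', 'r2', 'r3']]
```

A flagged group is a prompt for a human look, not proof of an error: a dense nursery can legitimately produce many records in one cell. Grid rounding can also split two nearby points that sit either side of a cell edge, so treat this as a first pass.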

This is where discipline matters. Reviewers need to check whether coordinates match the site description, whether timestamps make sense, and whether the evidence supports the claim. Some organisations layer this with spot checks, sample revisits, or third-party review. The Earth5R write-up on CSR reporting and geo-tagging points to that wider mix of geo-tags, audits, dashboards, and monitoring.
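Two of those reviewer checks can be partly automated. The sketch below measures how far a record sits from the registered site centre using the haversine formula and checks that the timestamp is plausible. The function names, record keys, and the 150-metre tolerance are assumptions for illustration, not settings from any specific tool.

```python
import math
from datetime import datetime, timezone

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def review_flags(record, site, now, max_offset_m=150.0):
    """Return human-readable reasons a reviewer should look at this record."""
    flags = []
    offset = haversine_m(record["lat"], record["lon"], site["lat"], site["lon"])
    if offset > max_offset_m:
        flags.append(f"location is {offset:.0f} m from the registered site")
    if record["captured_at"] > now:
        flags.append("timestamp is in the future")
    if not record["photos"]:
        flags.append("no photo attached")
    return flags


# Made-up site and record, for illustration only.
site = {"lat": 19.0000, "lon": 73.0000}
now = datetime(2025, 8, 1, 12, 0, tzinfo=timezone.utc)
record = {
    "lat": 19.0100,  # about 1.1 km north of the site centre
    "lon": 73.0000,
    "captured_at": datetime(2025, 8, 2, 9, 0, tzinfo=timezone.utc),
    "photos": [],
}

for flag in review_flags(record, site, now):
    print(flag)
```

Checks like these narrow down which records a reviewer should open first. They do not replace the judgement call on whether the evidence supports the claim.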

The boring bits (standard forms, training, consent, review rules) are what keep the flashy bits honest.

Why some reports hold up and others don’t

Stakeholders trust geo-tagged impact reports when the evidence lines up with common sense. If a borewell is meant to support a dry settlement, the location should fit that story. If a sanitation project claims lasting use, one inauguration photo won’t cut it. You need repeat visits, maintenance records, and a reason to believe the asset still works six months later.
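The "six months later" test can be written down as a simple rule. This is a minimal sketch, assuming each visit is stored with a date and a functional/non-functional observation; the function name, keys, and the 180-day threshold are illustrative choices.

```python
from datetime import date, timedelta


def has_lasting_use_evidence(installed_on, visits, min_gap_days=180):
    """True if some visit at least min_gap_days after installation found the asset working."""
    cutoff = installed_on + timedelta(days=min_gap_days)
    return any(v["date"] >= cutoff and v["functional"] for v in visits)


# Made-up dates, for illustration only.
installed = date(2025, 1, 10)
inauguration_only = [{"date": date(2025, 1, 10), "functional": True}]
with_revisit = inauguration_only + [{"date": date(2025, 8, 2), "functional": True}]

print(has_lasting_use_evidence(installed, inauguration_only))  # False
print(has_lasting_use_evidence(installed, with_revisit))       # True
```

A rule like this makes it obvious which assets in a report rest on an inauguration photo alone.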

In 2026, this matters even more because funders want less theatre and more traceability. Geo-tagged reporting helps show where money is going, where work is clustered, and where gaps remain. That’s useful in India, where programme activity often piles up around better-funded regions and more visible corporate networks.

Some project partners now build that transparency into the offer itself. One geo-tagged plantation reporting example describes digital monitoring, coordinates, and recurring proof for CSR tree programmes. The appeal is obvious: clearer reporting, fewer audit headaches, and less room for soft claims.

Still, there are limits. GPS accuracy is not perfect. A geo-tag can be spoofed. A report can drown readers in pins and still avoid the hard question: did people’s lives improve?

There are privacy issues too. Publishing exact household coordinates for children, survivors, or vulnerable communities is sloppy at best and harmful at worst. Sometimes village-level tagging is enough. Sometimes site clustering is the safer choice.
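Village-level tagging can be applied at the point of publication, so exact household coordinates stay in the internal dataset. The sketch below collapses household records into one point per village; the function name, record keys, and village centroids are hypothetical, and a real programme would take centroids from its own site register.

```python
from collections import Counter


def village_level_points(records, village_centroids):
    """Collapse household records into one publishable point per village."""
    counts = Counter(rec["village"] for rec in records)
    return [
        {
            "village": village,
            "lat": village_centroids[village][0],
            "lon": village_centroids[village][1],
            "records": count,
        }
        for village, count in sorted(counts.items())
    ]


# Made-up data, for illustration only.
household_records = [
    {"village": "Village A", "lat": 19.07612, "lon": 72.87741},
    {"village": "Village A", "lat": 19.07655, "lon": 72.87802},
    {"village": "Village B", "lat": 19.10231, "lon": 72.90117},
]
centroids = {"Village A": (19.076, 72.878), "Village B": (19.102, 72.901)}

public = village_level_points(household_records, centroids)
for point in public:
    print(point)
```

The published output carries only the village name, its centroid, and a count. No household coordinate appears in it.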

The strongest reports don’t hide behind maps. They combine place, time, proof, and follow-up. They show the intervention, explain the outcome, and admit the weak points before a sceptical stakeholder does.

The proof has to travel further than the photo

The whole point of geo-tagged reporting is trust you can test. Not trust built on branding, warm words, or one tidy dashboard screenshot.

If you’re building these reports, keep the standard simple: can another person trace the claim back to the field and see whether it holds up? If yes, the report is doing its job. If not, it’s only polished paperwork.

For a live example of field-first, place-linked transparency, you can Contribute to Active Missions. The best reports don’t ask for blind faith. They make verification feel normal.